The 1000W Beast: Reviewing the RTX 5090 for AI Training

I still remember the first time I tried to set up a high-compute AI rig for my machine learning projects. The amount of information out there is overwhelming, and it’s easy to get lost in a sea of technical jargon and conflicting opinions. Everyone seems to be peddling their own brand of “revolutionary” AI solution, but few actually deliver. As someone who’s been in the trenches, I can tell you that it’s time to cut through the hype and get to the heart of what really matters.

In this article, I’ll give you the no-nonsense lowdown on high-compute AI rigs. I’ll share my own experiences, both successes and failures, to help you make informed decisions about your own AI setup. We’ll dive into the key considerations you need to keep in mind, from hardware and software to scalability and cost. My goal is to give you practical advice you can actually use, rather than regurgitating technical specs or marketing buzzwords. By the end, you’ll be equipped to get the most out of your high-compute AI rig and take your projects to the next level.

Table of Contents

- Unlocking High-Compute AI Rigs

- Optimizing High-Compute AI Rigs

- Building Custom AI Rig Builds With AI Workload Optimization Techniques

- Implementing Advanced Cooling Solutions for AI in Scalable AI Infrastructure

- 5 Crucial Considerations for Maximizing High-Compute AI Rig Potential

- Key Takeaways for High-Compute AI Rigs

- Unlocking the Future of AI

- Conclusion

- Frequently Asked Questions

Unlocking High-Compute AI Rigs

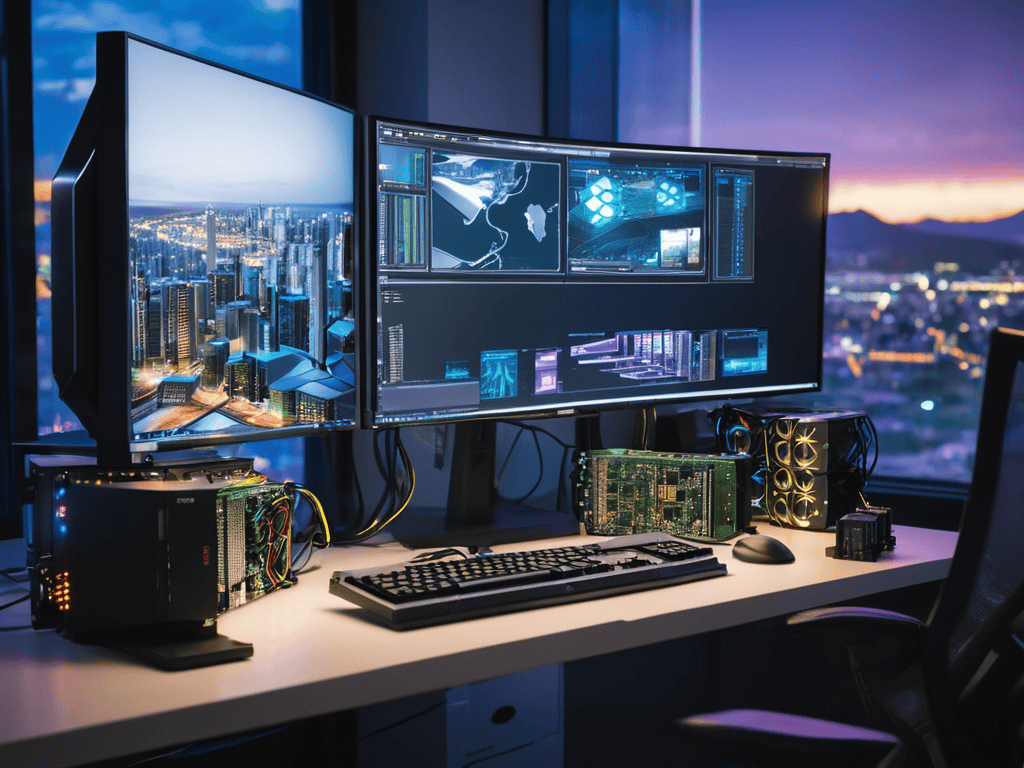

To unlock the potential of high-performance computing, you need to understand AI computing performance metrics. In a custom build, a high-end GPU comparison becomes a crucial factor in determining the overall efficiency of your system. By carefully selecting and configuring your GPUs, you can significantly speed up your workflow and take on even the most demanding tasks.

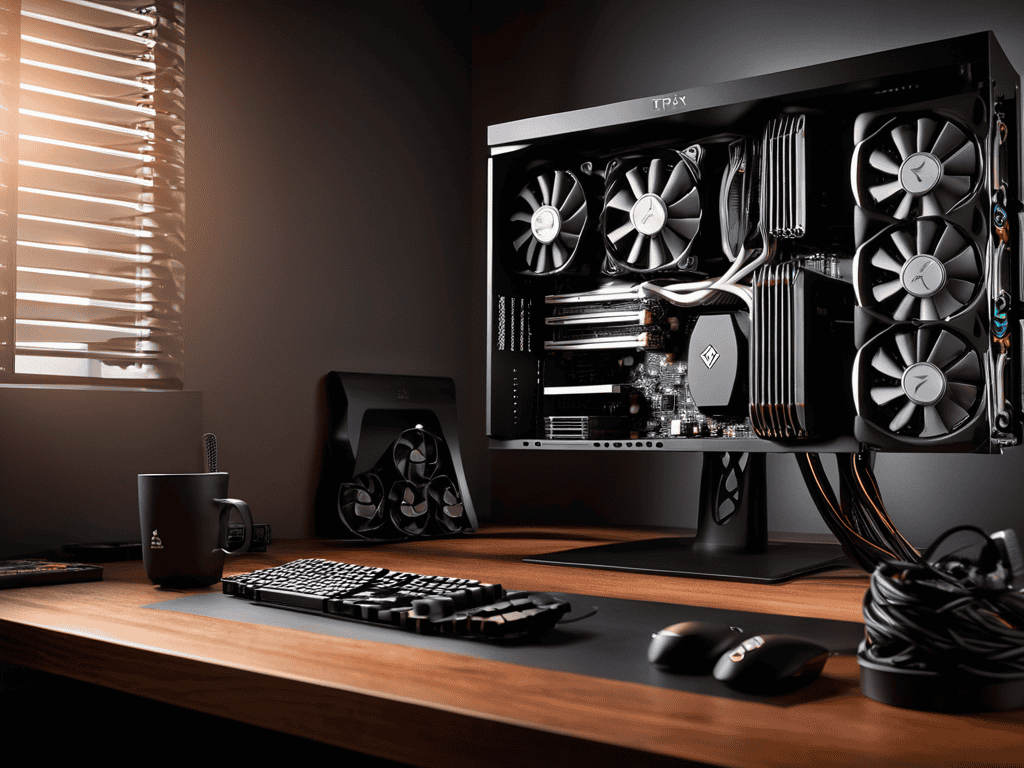

When it comes to building a custom AI rig, AI workload optimization techniques play a vital role in keeping your system at peak performance. This involves allocating resources strategically and implementing advanced cooling solutions to prevent overheating. By doing so, you can create a robust and reliable system that can handle complex computations without breaking a sweat.

As you dig deeper into high-compute AI rigs, you’ll run into terminology and technical specifications that can overwhelm even seasoned professionals. Online forums and communities dedicated to AI infrastructure design are a good place to find practical insights and expert advice, and they show how much an enthusiast community gains from sharing knowledge and supporting one another.

A well-designed, scalable AI infrastructure is also essential for maximizing the potential of your AI rig. This involves creating a modular system that can be easily upgraded or modified as needed, allowing you to stay ahead of the curve in terms of technology and performance. By focusing on these key areas, you can build a high-performance AI rig that speeds up your workflow and raises your productivity.

Mastering High-End GPU Comparison for Optimal Gains

When it comes to high-performance computing, the right GPU can make all the difference. Comparing different models and their specifications is crucial to ensure you’re getting the best bang for your buck. A thorough high-end GPU comparison will help you identify the strengths and weaknesses of each option, allowing you to make an informed decision.

To get the most out of your high-compute AI rig, you need to consider the optimal GPU configuration, taking into account factors such as memory, clock speed, and power consumption. By finding the right balance between these elements, you can get the most computing power out of your build.
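One way to make that balance concrete is a simple weighted score. The snippet below is a minimal sketch, not a benchmark: the `GpuSpec` class, the `score` and `rank` helpers, the weights, and every spec number are placeholders I made up for illustration, so swap in real spec-sheet values and weights that match your own workload.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class GpuSpec:
    """Spec-sheet values for one card (hypothetical numbers in the example)."""
    name: str
    memory_gb: float
    boost_clock_mhz: float
    board_power_w: float


def score(gpu: GpuSpec, weights: dict[str, float], baseline: GpuSpec) -> float:
    """Weighted score relative to a baseline card.

    Memory and clock count in the card's favour; power is inverted so a
    lower board power scores higher. The baseline itself scores the sum
    of the weights.
    """
    return (
        weights["memory"] * gpu.memory_gb / baseline.memory_gb
        + weights["clock"] * gpu.boost_clock_mhz / baseline.boost_clock_mhz
        + weights["power"] * baseline.board_power_w / gpu.board_power_w
    )


def rank(gpus: list[GpuSpec], weights: dict[str, float], baseline: GpuSpec) -> list[GpuSpec]:
    """Return the cards sorted from highest to lowest score."""
    return sorted(gpus, key=lambda g: score(g, weights, baseline), reverse=True)


if __name__ == "__main__":
    # Placeholder cards, not real products or measured figures.
    card_a = GpuSpec("card-a", memory_gb=32, boost_clock_mhz=2400, board_power_w=575)
    card_b = GpuSpec("card-b", memory_gb=24, boost_clock_mhz=2500, board_power_w=450)
    # Assumed weighting for a memory-hungry training workload.
    weights = {"memory": 0.6, "clock": 0.1, "power": 0.3}
    for gpu in rank([card_a, card_b], weights, baseline=card_b):
        print(f"{gpu.name}: {score(gpu, weights, card_b):.3f}")
```

Spec-sheet ratios like these are only a first filter. Measured throughput on your own model is what should settle the decision.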

Turbocharging AI Computing Performance Metrics

To truly unleash the power of high-compute AI rigs, it’s essential to focus on optimizing performance metrics. This involves fine-tuning the system to achieve the perfect balance between processing power and energy efficiency. By doing so, users can expect a significant boost in their workflow, allowing them to tackle complex tasks with ease.

The key to turbocharging AI computing lies in the rig’s ability to handle massive amounts of data while maintaining a high level of precision. This is particularly crucial in applications such as machine learning and deep learning, where even the smallest margin of error can have significant consequences.

Optimizing High-Compute AI Rigs

To take your AI computing to the next level, optimizing performance is crucial. This starts with understanding AI computing performance metrics and how they affect your workflow. By tuning your system against these metrics, you can unlock significant gains in processing power and efficiency, whether you’re working with machine learning or deep learning applications.

When it comes to building a custom AI rig, a high-end GPU comparison is a critical step. By selecting the right GPU for your needs, you can make sure your rig is capable of handling demanding AI workloads. This, in turn, lets you tackle complex projects with confidence and focus on pushing the boundaries of what’s possible with AI.

By incorporating advanced cooling solutions into your custom rig build, you can further improve performance and reliability. This is particularly important when working with high-performance components that generate significant heat. By keeping your rig cool and stable, you can ensure that it runs at optimal levels, even during intense processing sessions. This attention to detail is what sets a truly scalable AI infrastructure apart from a standard build.

Building Custom AI Rig Builds With AI Workload Optimization Techniques

When it comes to building a custom AI rig, AI workload optimization techniques play a crucial role in maximizing performance. By carefully selecting and configuring each component, you can create a system that is tailored to your specific needs. This approach makes more efficient use of resources, resulting in faster processing times and improved overall productivity.

To take your custom AI rig build to the next level, consider implementing hardware acceleration techniques. This can include the use of specialized GPUs, CPUs, or other components that are designed to handle demanding AI workloads. By leveraging these technologies, you can significantly boost your system’s performance and achieve faster results.

Implementing Advanced Cooling Solutions for AI in Scalable AI Infrastructure

When designing scalable AI infrastructure, it’s crucial to consider the thermal implications of high-compute operations. Advanced cooling solutions can make all the difference in maintaining optimal performance and preventing overheating. By incorporating effective cooling systems, you can ensure your AI rig runs smoothly and efficiently, even during intense workloads.

To achieve this, focus on liquid cooling systems, which offer superior heat dissipation compared to traditional air cooling methods. This allows for more compact and scalable designs, enabling you to build high-density AI infrastructure that can handle demanding workloads with ease.
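To get a feel for what a liquid loop has to handle, you can estimate the coolant flow from the standard heat-transfer relation Q = ṁ · c_p · ΔT. The sketch below is a rough back-of-the-envelope helper under assumptions I’m adding for illustration: plain water as the coolant (specific heat about 4186 J/(kg·K), density about 1 kg/L), all of the heat load going into the loop, and a function name, `required_flow_l_per_min`, that is my own.

```python
WATER_SPECIFIC_HEAT_J_PER_KG_K = 4186.0  # approximate value for liquid water
WATER_DENSITY_KG_PER_L = 1.0             # approximation; glycol mixes differ


def required_flow_l_per_min(heat_load_w: float, coolant_delta_t_k: float) -> float:
    """Coolant flow needed to carry away a heat load at a given temperature rise.

    heat_load_w:       heat dumped into the loop, in watts
    coolant_delta_t_k: allowed coolant temperature rise across the blocks, in kelvin
    """
    if heat_load_w < 0:
        raise ValueError("heat_load_w must be non-negative")
    if coolant_delta_t_k <= 0:
        raise ValueError("coolant_delta_t_k must be positive")
    # Q = m_dot * c_p * delta_T, solved for the mass flow m_dot in kg/s.
    mass_flow_kg_per_s = heat_load_w / (WATER_SPECIFIC_HEAT_J_PER_KG_K * coolant_delta_t_k)
    # Convert kg/s to L/min using the assumed coolant density.
    return mass_flow_kg_per_s / WATER_DENSITY_KG_PER_L * 60.0


if __name__ == "__main__":
    # Illustrative case: a 1000 W heat load with a 10 K coolant temperature rise.
    print(f"{required_flow_l_per_min(1000.0, 10.0):.2f} L/min")
```

For that illustrative 1000 W load and a 10 K rise, the estimate comes out at roughly 1.4 L/min. Treat it as a lower bound for sizing, since it ignores radiator capacity, pump head, and ambient temperature.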

5 Crucial Considerations for Maximizing High-Compute AI Rig Potential

- Assess your specific AI workload requirements to determine the optimal balance of CPU, GPU, and memory resources

- Select the right high-end GPU for your needs by comparing performance benchmarks and considering factors like power consumption and cooling requirements

- Implement a robust cooling system to prevent overheating and ensure reliable operation, even during intense computational workloads

- Consider using liquid cooling or other advanced thermal management techniques to further enhance performance and reduce noise levels

- Regularly monitor and optimize your AI rig’s performance using tools like GPU stress tests and system monitoring software to identify bottlenecks and areas for improvement
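For the monitoring point in the list above, here is a minimal sketch that reads NVIDIA’s `nvidia-smi` tool in CSV mode and flags a few common warning signs. The thresholds (83 °C, 95% memory, 50% utilization) and the helper names are my own assumptions rather than vendor guidance, and the parser assumes every queried field comes back as a plain number, which may not hold on every card or driver.

```python
from __future__ import annotations

import subprocess
from typing import NamedTuple

# Field names accepted by nvidia-smi's --query-gpu option.
QUERY_FIELDS = "index,temperature.gpu,utilization.gpu,power.draw,memory.used,memory.total"


class GpuSample(NamedTuple):
    index: int
    temp_c: float
    util_pct: float
    power_w: float
    mem_used_mib: float
    mem_total_mib: float


def parse_samples(csv_text: str) -> list[GpuSample]:
    """Parse 'csv,noheader,nounits' output, one GPU per line."""
    samples = []
    for line in csv_text.splitlines():
        if not line.strip():
            continue
        idx, *rest = [field.strip() for field in line.split(",")]
        samples.append(GpuSample(int(idx), *map(float, rest)))
    return samples


def query_gpus() -> list[GpuSample]:
    """Run nvidia-smi once; requires an NVIDIA driver on the machine."""
    result = subprocess.run(
        ["nvidia-smi", f"--query-gpu={QUERY_FIELDS}", "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    )
    return parse_samples(result.stdout)


def warnings_for(sample: GpuSample, temp_limit_c: float = 83.0,
                 mem_limit_frac: float = 0.95, low_util_pct: float = 50.0) -> list[str]:
    """Return human-readable notes for one GPU (thresholds are assumptions)."""
    notes = []
    if sample.temp_c >= temp_limit_c:
        notes.append("running hot; check cooling")
    if sample.mem_used_mib / sample.mem_total_mib >= mem_limit_frac:
        notes.append("memory nearly full")
    if sample.util_pct < low_util_pct:
        notes.append("GPU underused; possible data-loading bottleneck")
    return notes


if __name__ == "__main__":
    for gpu in query_gpus():
        print(gpu.index, warnings_for(gpu) or ["ok"])
```

Running it in a loop during a training job gives a quick read on whether the rig is limited by heat, by memory, or by something upstream of the GPU.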

Key Takeaways for High-Compute AI Rigs

High-compute AI rigs can revolutionize your workflow, but it’s crucial to do your homework before investing in one so that you get the right hardware for your specific needs.

Optimizing your AI rig’s performance comes down to three things: comparing high-end GPUs carefully, building a custom rig around your AI workloads, and implementing advanced cooling that scales with your infrastructure.

By tuning your rig against the performance metrics that matter for your workload, you can get far more efficiency and productivity out of your machine learning and deep learning applications.

Unlocking the Future of AI

A high-compute AI rig is not just a machine, it’s a key that unlocks the doors of innovation, allowing us to push the boundaries of what’s possible and redefine the landscape of artificial intelligence.

Ethan Wright

Conclusion

As we’ve explored the world of high-compute AI rigs, it’s clear that unlocking their full potential requires a solid understanding of AI computing performance metrics and careful high-end GPU comparisons. By applying these principles and optimizing our rigs with custom builds and advanced cooling solutions, we can get far more computational power out of them. Whether you’re a researcher, developer, or entrepreneur, the key to success lies in building a custom AI rig that is tailored to your specific needs and workloads.

As we look to the future, it’s exciting to think about the possibilities that high-compute AI rigs will enable. From revolutionizing industries to solving complex problems, the potential for innovation is vast. By embracing the power of AI and scalable infrastructure design, we can create a new era of technological advancements that will transform our world. So, let’s unleash the beast and see where the journey takes us – the future of high-compute AI rigs is bright, and it’s waiting for us to explore and innovate.

Frequently Asked Questions

What are the key considerations for choosing the right high-compute AI rig for my specific workflow?

When choosing a high-compute AI rig, consider your specific workflow’s demands – think data size, model complexity, and desired processing speed. When in doubt, prioritize a balance of CPU, GPU, and memory to ensure your rig can handle your unique workload without breaking the bank.
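To turn “model complexity” into a number, a common rule of thumb for mixed-precision training with the Adam optimizer is about 16 bytes of GPU memory per parameter for weights, gradients, and optimizer state, before counting activations. The sketch below applies that rule. The function names and the 20% headroom for activations and framework overhead are assumptions of mine, so treat the result as a rough floor and not a guarantee.

```python
# 2 bytes fp16 weights + 2 bytes fp16 gradients
# + 12 bytes fp32 master weights, momentum, and variance (Adam).
BYTES_PER_PARAM_MIXED_PRECISION_ADAM = 16


def model_state_gib(num_params: int,
                    bytes_per_param: int = BYTES_PER_PARAM_MIXED_PRECISION_ADAM) -> float:
    """Memory for weights, gradients, and optimizer state, in GiB (no activations)."""
    return num_params * bytes_per_param / 2**30


def fits_on_gpu(num_params: int, gpu_memory_gib: float, headroom_frac: float = 0.2,
                bytes_per_param: int = BYTES_PER_PARAM_MIXED_PRECISION_ADAM) -> bool:
    """True if the model state fits after reserving headroom (an assumed 20%)."""
    usable_gib = gpu_memory_gib * (1.0 - headroom_frac)
    return model_state_gib(num_params, bytes_per_param) <= usable_gib


if __name__ == "__main__":
    # Illustrative model sizes against a hypothetical 24 GiB card.
    for params in (1_000_000_000, 7_000_000_000):
        print(f"{params:,} params: {model_state_gib(params):.1f} GiB, "
              f"fits on 24 GiB: {fits_on_gpu(params, 24.0)}")
```

If the estimate doesn’t fit, that is a sign to look at a card with more memory, multiple GPUs, or memory-saving training techniques before worrying about clock speed.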

How do I ensure that my high-compute AI rig is properly optimized for power consumption and heat management?

To optimize your high-compute AI rig’s power consumption and heat management, focus on balancing GPU and CPU workloads, and invest in a top-notch cooling system – I’m talking custom liquid cooling or high-quality air cooling solutions. This will help keep your rig running smoothly and prevent overheating.

Can I upgrade or customize my existing hardware to achieve high-compute AI capabilities, or do I need to purchase a brand new system?

Upgrading your existing rig can be a viable option, but it depends on your current hardware. If you’ve got a solid foundation, swapping out your GPU or adding more RAM might do the trick. However, if you’re working with outdated tech, it might be more cost-effective to build or buy a new system optimized for high-compute AI tasks.